If you’re reading this you probably have some idea what HDR is and what it means. If not, you can read up on it here. If yes, you’ll probably agree that HDR is still pretty new and mostly unknown to the mass consumer. It’s fair to say that HDR support is now common in the TVs sold today and is making inroads into the installed base of TV sets at a predictable rate, given the stable replacement cycle of TVs. It’s also fair to say that while HDR is quickly gaining acceptance in Hollywood movies and in productions for streaming platforms, it’s virtually nowhere yet in broadcast TV.

Naturally and understandably, a lot of people who buy an HDR TV want HDR content to show off the capabilities of their new set. They may want to impress their friends, wow their relatives or simply enjoy the new possibilities they’ve got and justify the purchase. Some movies will be better suited to that than others.

Fake HDR?

Vincent Teoh of HDTVTest nowadays runs a YouTube channel with lots of videos about UHD TVs, display technologies, and UHD movies. Lately, he – together with Adam Fairclough – has produced a string of videos in which he checks how bright HDR movies get in terms of nits. He’s been evaluating The Mandalorian, Star Wars and Mulan on Disney+ and Mad Max: Fury Road, Star Wars: The Rise of Skywalker, Star Wars: The Last Jedi, Blade Runner 2049, The Matrix, The Greatest Showman, Wonder Woman, Alita: Battle Angel as well as Rogue One: A Star Wars Story on Ultra HD Blu-ray. Some of these, such as The Matrix, have a high luminance level that peaks at over 1,000 nits, while others, such as most of the Star Wars movies and Blade Runner, only get to about 200 nits. This latter category Vincent calls “fake HDR”. Is that right? The creators beg to differ.

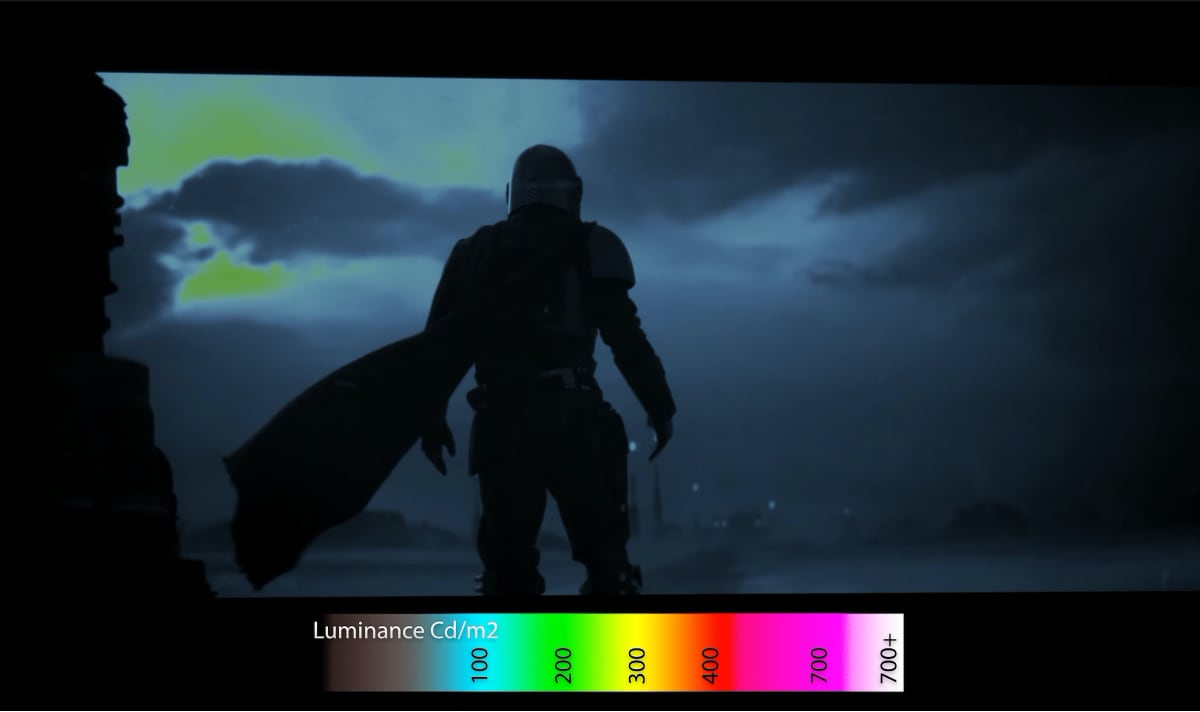

False-color 'HDR heatmap' image of The Mandalorian showing the luminance levels of all picture elements. Image credit: Disney+ / HDTVTest

The issues at stake

To begin, let’s please get one common, deep-rooted misunderstanding out of the way: that HDR is about overall brighter pictures. That's just wrong. It's a wider range of luminance, so deeper blacks and brighter highlights, especially specular highlights – light reflecting off shiny objects.

Another question is where to draw the line. We may think of 1,000 nits as bright now, but in a few years 10,000 nits may be achievable. Will we then look at 1,000-nit movies as 'fake' HDR? Let’s hope we won’t. The only line that can be drawn objectively is SDR’s 100 nits. Anything above that is, in principle, HDR.
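To make that 100-nit line concrete, here is a minimal Python sketch of the kind of summary a luminance check boils down to. It assumes you already have per-pixel luminance values in nits for a frame (how those are decoded from the video signal is left out), and the function name, the dictionary keys and the sample numbers are all made up for illustration. It is not how HDTVTest produces its heatmaps.

```python
# Hypothetical helper: summarize a frame's luminance against the SDR line.
SDR_REFERENCE_NITS = 100.0  # the one line the article calls objective


def summarize_luminance(nits):
    """Return peak luminance and the share of samples above the SDR line."""
    samples = list(nits)
    if not samples:
        raise ValueError("need at least one luminance sample")
    peak = max(samples)
    above = sum(1 for value in samples if value > SDR_REFERENCE_NITS)
    return {
        "peak_nits": peak,
        "share_above_sdr": above / len(samples),
        "exceeds_sdr": peak > SDR_REFERENCE_NITS,
    }


# Made-up frame: a mostly dim picture with one small 200-nit highlight.
frame = [0.05, 12.0, 48.0, 95.0, 200.0]
summary = summarize_luminance(frame)
print(summary)
```

By the only objective line available, this made-up frame exceeds SDR even though just one sample in five rises above 100 nits. Whether that is 'enough' is a creative question, not a numerical one.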

Of course, as noted above, consumers don’t buy an HDR TV to see something that looks a lot like SDR. But 'fake HDR' versus 'real HDR' is the wrong way to frame this distinction. HDR is not unlike other video and audio innovations. Let’s take color TV. People did not buy a color TV to watch black & white programs, but decades later some black & white movies are still being shot because, long after color TV became the norm, we’ve come to appreciate the artistic merit of black & white films. It’s the creative intent. Even when such a film is screened on a color TV (or an Ultra HD one), we don’t consider it a 'fake color movie'.

Roma is not a fake color movie or 'fake WCG' – it’s a gorgeous black and white movie in a color container that it uses only partly. Oh and by the way, it looks stunning in 4K with Dolby Vision HDR. HDR is not just about “more vivid colors”, you see?

Also, movies don’t have to use the most saturated reds, greens or blues to qualify as color movies. All movies with colors are color movies. Some are more colorful than others, but obviously that can't be used as a measure of quality.

“But then they shouldn’t call it HDR,” some will argue. Apart from the fact that technically it qualifies as HDR – it exceeds SDR – in Disney’s defense they’re not emphasizing the HDR aspect in their marketing. It’s The Mandalorian and yes, in the small print you can see that it’s delivered in 4K with HDR, even Dolby Vision if you’ve got the right hardware, but it’s not called Nits Wars, or 'The Mandalorian in HDR'.

Creative Intent

There are many ways to use HDR, but at the extreme ends there’s a subtle approach that stays close to what we’re used to and an aggressive approach that delivers eye-popping, nearly blinding video. HDR offers creators a more sophisticated canvas (or, if you will, an enhanced paint box) and some consciously choose to use it in a very restrained way. Here’s what Charles Bunnag, one of the colorists/finishing artists on the team that did The Mandalorian, had to say about this:

It was done with true HDR with a creative intent to not go super bright. Whether someone thinks this is bright enough or not is welcome to their opinion. My main issue with this article and similar critiques I have seen lately is that they are taking guesses and presenting them as fact. They could have simply reached out to someone and asked. This leads me to another concern I have been encountering lately, using only technical numbers to judge and guide creative intent. While art and technology have a tight relationship in our field, having a deep understanding of technology and technical processes does not equate to making well informed artistic decisions. In this case, it seems like what matters to the “not HDR enough” group is hitting certain numbers first, rather than what supports the best narrative and creative intent.

I also find the argument that the “image doesn’t go bright enough” for their tastes as strange as if they said a sound mix isn’t truly surround sound because the surrounds are not active all of the time and blasting at full volume.

That analogy is exactly what came to my mind as well. Disney in particular often gets criticized for delivering Dolby Atmos mixes, jokingly referred to as 'AtMouse', that don’t make your house rumble. Does that make it fake Atmos? No, it’s just an artistic choice. There’s been the same sort of discussion with DVD-Audio and Super Audio CD* and no doubt with Dolby Digital and every surround sound format before and after.

In fact, the audio analogy goes back way further, to the early days of stereo sound, which became available to consumers in the sixties. If you’d bought a set, of course you wanted to hear it, and not something that was indistinguishable from mono. That’s where the term 'ping pong stereo' comes from. Demo records from those days used stereo to extreme effect. Also stereo music from that era typically uses extreme channel separation, very different from what we’ve gotten used to later, when stereo became mature.

And therein lies perhaps the answer. What consumers need at the moment is some showcase HDR content, and while some may feel movies like the Star Wars Saga and series like The Mandalorian lend themselves excellently to this, the creators at Disney chose otherwise and that’s their prerogative. Colorist Alexis van Hurkman has some more technical considerations, based on how HDR TVs work, as to why grading content overly bright may be a bad idea.

It's probably not even fair to single out Disney here. As you can see from the videos, many other movies take the subtle approach. Sicario and Blade Runner 2049, both directed by Denis Villeneuve and both visually fantastic, were shot by renowned cinematographer Roger Deakins. Here’s what he says about preparing home media releases with HDR:

I felt like I had to suppress a lot of the contrast because sometimes the highlights were so bright you couldn’t actually see things. For example, there was a scene with somebody standing against a window, and you couldn’t see the person’s face because the highlights outside were so bright. So, a lot of it is suppressing highlights and actually putting less information in shadows when you don’t really want (to see) in there.

The scene he’s talking about is probably this one:

Picture credit: Stu Maschwitz

On his own website he writes:

If we had not done a separate timing pass for 'Sicario', the HDR version would have been like watching a completely different film. If you create a balance of light and dark on set you expect that balance to be maintained throughout the process. I personally resent being told my work looks 'better' with brighter whites and more saturation.

And furthermore:

I wouldn't see a reason to change how I shoot for HDR as I want the projected image to have the overall contrast and tonal balance that I have shot. That is why I did a separate HDR pass for 'Sicario' and I will certainly do the same for any future project. Yes, the black being so black in projection is a good option as is the intense brightness of the highlights, though less so in my mind. But I see it only as an option.

Here you see creative intent. Many filmmakers and cinematographers just want to tell a beautiful story. They will put the tools at hand to good use to improve the visual storytelling but in a conservative way – no radical break from the past. They may not care how many nits your TV can render because they don’t intend to turn up the brightness to eleven.

An HDR TV must be capable of reproducing deep blacks and high brightness (at the same time – that’s what makes contrast) as well as a wider color gamut, and it must deliver on other picture parameters too. The display serves as an enabler for whatever content you throw at it. It should do what the signal tells it to do, so ideally it should have plenty of 'overhead' capacity. If it gets used to the max all the time then there is no headroom.

Legacy

Another aspect to consider is history: Many of the movies that people complain about being "fake HDR" are part of a legacy. Star Wars has a very particular look, sound, ambience, and feeling. You shouldn't want to change that just because it's technically possible. The Mandalorian is an extension of this 'universe'.

A similar point can be made for other parameters like High Frame Rate (HFR). Just because UHD permits 100/120 frames per second doesn't mean every production should use them. A lot of people might dislike movies that do. Over time, content creators will use these new possibilities to create new, fantastic looks for new movies, series or games, but we shouldn't force them onto works with an established history.

Where to go from here

Fortunately for new HDR TV owners, other filmmakers like to go for maximum sensation. If you want to test your HDR TV’s peak luminance you could watch Wonder Woman, Mad Max: Fury Road, The Matrix or The Greatest Showman on 4K HDR Ultra HD Blu-ray, or even Disney’s Mulan (on Disney+).

The bigger 'canvas' and extended toolbox give creators more freedom to create the desired look. Some decide to go big and bold; others decide to go in a different direction. We should not demand that all content creators go in the same direction. Just because a TV can display 4K or even 8K doesn’t mean that all content must be razor-sharp. It is entirely up to content creators if they want a soft look, a faded look, a desaturated look, a colorful look, a psychedelic look, or whatever they think is best in a given situation.

We need to stop talking of 'fake HDR'. It’s not fake. If we use that term at all, let’s please reserve it for cock-ups like the Red Dead Redemption 2 game, where they faked HDR by turning up the brightness on SDR by means of a way too simple formula.

Let’s instead talk about showcase HDR and subtle HDR.

Multichannel music

I was Product Manager for SACD at Philips. The high-resolution/multichannel successor to the CD was launched in 1999. I vividly recall the debates taking place on internet forums in the early days. SACDs can be high-res stereo or high-res multichannel, “5.1” if you will. Sony was predominantly interested in high resolution (catering to audiophiles), Philips in multichannel (appealing to the mass market). To some early adopters (the undersigned included) SACDs are of moderate interest if they're stereo-only. Meanwhile, many audiophiles don't care about multichannel and only play the stereo track (which is present on 99.9% of all multichannel discs).

There are essentially two ways to use surround channels for music.

- Classical music, jazz and other genres that are performed acoustically use multichannel to achieve the most faithful reproduction of that live performance. The surrounds mostly carry reflections and reverberations from the hall, and the noises from the crowd. It’s subtle.

- Pop/rock music on the other hand, is usually recorded in a studio and the balance is created entirely on the mixing table. Here, the artists or producer can create elaborate soundscapes and the use of the surround channels may be ‘aggressive’.

With DVD-Audio the picture is the same as with SACD, except that this format was much more skewed towards pop/rock. After the first few years, however, very few DVD-Audio titles were released. SACD, on the other hand, kept going and going, year after year, surpassing 10,000 titles a couple of years ago and continuing to see new releases to this day.

Around the year 2003 however, the major record companies withdrew and boutique labels stepped in. These labels mostly release classical music, some jazz, and little else. Pop/rock releases have become rather rare. So in the end the vast majority of releases we've got offer stereo and very subtle, natural surround sound.

Sure, it’s a niche market. But the interesting thing is that most current users of multichannel music set-ups are not looking for music that blasts sounds from all directions.

There are other interesting lessons to be learned from this chapter in history. SACD and DVD-Audio of course aimed to achieve the same thing and targeted more or less the same people. From the beginning it’s been framed as a format war, which probably scared away many prospective buyers and made them adopt a wait-and-see approach, expecting one of the two formats to win. The formats were not mutually exclusive however: multi-format players were possible and started to appear on the market after the first few years. By this time however it was too late: The damage had been done.

That’s why it’s important to keep in mind that the competing HDR formats are not engaged in a format war. Multi-HDR support is possible. In fact it can be realized on the hardware side as well as the content side (except for broadcast). So please don’t call it a format war.

FlatpanelsHD

Yoeri Geutskens works as a consultant in media technology with years of experience in consumer electronics and telecommunications. He writes about high-resolution audio and video. You can find his blogs about Ultra HD at @UHD4k and @UltraHDBluray.