The road to TV heaven

After decades of slow but steady innovations in TV technology – the introductions of color TV, stereo sound for TV, Teletext, 16:9 widescreen TV, digital TV, flat panel displays and HD TV were many years apart – we’re now witnessing a raft of video and audio innovations in a relatively short period of time.

There are essentially six developments taking place at this moment, most of which come with a three-letter acronym:

- UHD or Ultra HD – increased spatial resolution

- HDR or High Dynamic Range

- WCG or Wide Color Gamut

- Deep color resolution

- HFR or High-Frame Rate

- NGA or Next-Generation Audio

Some other developments dealing with audio/video encoding or transport rather than the A/V itself are related to this and taking place in parallel. Let’s look at each of them one by one.

Spatial resolution

About ten years ago, the TV industry started making the switch from what we now call SD (Standard Definition) TV to High Definition or HD TV, increasing the number of lines from 480 in 60Hz/NTSC territories like the USA and Japan, or 576 in 50Hz/PAL areas such as Europe, to 720 or 1080 lines, while optionally also going from interlaced to progressive video. The ultimate resolution for HDTV is 1920×1080p.

With a clear trend of consumers buying ever larger displays (while not increasing the viewing distance) and TV manufacturers looking for new features to sell TV sets and offset a downward price trend, the need was felt to introduce Ultra HD – a resolution of 3840×2160, i.e. twice the horizontal and vertical resolution of 1080p HD, resulting in four times as many pixels. UHD TV sets were first introduced into the consumer market in 2013 and have gained adoption fairly quickly – more quickly than HDTV sets a decade earlier, according to market researchers. However, adoption has not yet been as fast and widespread as hoped, so TV makers are looking for additional ways to sway consumers.
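The "four times as many pixels" figure follows directly from the two resolutions quoted above. A minimal sketch of the arithmetic (the names `HD`, `UHD` and `pixel_count` are only illustrative):

```python
# Resolutions quoted in the text, as (width, height) in pixels.
HD = (1920, 1080)
UHD = (3840, 2160)


def pixel_count(resolution):
    """Total number of pixels in one frame."""
    width, height = resolution
    return width * height


# Doubling both dimensions quadruples the pixel count.
ratio = pixel_count(UHD) / pixel_count(HD)
print(pixel_count(HD), pixel_count(UHD), ratio)
```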

Dynamic range

One such way is High Dynamic Range – not the same technology as the one by the same name used in photography but aiming for similar goals. There are various technical solutions for implementing HDR. The most prominent ones are the open HDR10 standard, Dolby Vision by Dolby Labs, a joint proposal by Technicolor and Philips, HDR10+ by Samsung, and Hybrid Log Gamma (HLG) by the BBC and NHK. Some of these solutions involve dynamic metadata, others static metadata or no metadata at all. There are single-layer and dual-layer solutions with different implications for compatibility with legacy devices. For a backgrounder on HDR, check The State of HDR mid-2017.

Color gamut

Wide Color Gamut means the adoption of a larger color space than BT.709, which was specified for HDTV. For UHD TV, various organizations have standardized upon BT.2020. HDR more or less requires Wide Color Gamut, although the use of WCG does not automatically imply HDR. Expect any UHD TV on the market now to support WCG, even if it doesn’t support HDR.

Color resolution

In theory, a wider color gamut and higher dynamic range would be possible with the existing 8-bit color resolution, but the risk of color banding would loom large. Both WCG and HDR are greatly helped by the transition from 8-bit to 10-bit and 12-bit video. The latter is mostly used in production; the former in distribution to consumers. Note that the number here refers to the bit depth per subpixel, i.e. 8, 10 or 12 bits for R, G and B each, resulting in 24, 30 or 36 bits per pixel.
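To make the bit-depth figures concrete, here is a small sketch of how bits per subpixel translate into bits per pixel and into the number of distinct levels per color channel. More levels mean finer steps, which is why higher bit depths reduce the risk of banding. The function names are illustrative only.

```python
def levels_per_channel(bits):
    """Number of distinct values a single R, G or B subpixel can take."""
    return 2 ** bits


def bits_per_pixel(bits, channels=3):
    """Total bits for one pixel with the given number of color channels."""
    return bits * channels


for bits in (8, 10, 12):
    print(bits, levels_per_channel(bits), bits_per_pixel(bits))
```

Going from 8 to 10 bits quadruples the number of levels per channel (256 to 1024), while the per-pixel cost only rises from 24 to 30 bits.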

Frame rate

Where initial Ultra HD TV sets and broadcasts were at 25 frames per second (in Europe) or 30 fps (North America), the current state of the art is double that – 50 or 60 fps. That’s not going to be enough, however, and it’s not what is referred to as High Frame Rate. With increasing spatial resolution, the risk of motion blur increases as well. The cure for this is further raising the temporal resolution to 100 / 120 fps. This is what’s called HFR. It is estimated that a doubling of frame rate leads to a 50% increase in bandwidth for the compressed video signal – so not 100% – because compression efficiency increases with the frame rate.
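The rule of thumb above (roughly 50% more compressed bandwidth per doubling of frame rate) can be sketched as a simple scaling function. This is only an illustration of that estimate, not a codec model; the 20 Mbps starting bitrate is a hypothetical figure chosen for the example.

```python
import math


def estimated_bitrate(base_bitrate, base_fps, target_fps, growth_per_doubling=1.5):
    """Scale a bitrate by the quoted estimate: x1.5 per doubling of frame rate."""
    doublings = math.log2(target_fps / base_fps)
    return base_bitrate * growth_per_doubling ** doublings


# Hypothetical 20 Mbps stream at 60 fps, raised to 120 fps (HFR).
print(estimated_bitrate(20.0, 60, 120))
# The same estimate applied across two doublings, from 30 fps to 120 fps.
print(estimated_bitrate(20.0, 30, 120))
```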

Immersive audio

The best quality level for broadcast surround sound until recently was 7.1-channel Dolby Digital Plus. Although higher channel counts are possible for a more immersive experience, Dolby (and DTS) have taken a different approach, first pioneered in the cinema but more recently adapted for home use: object-based audio, where sounds can be placed anywhere in space and speaker configurations are far more flexible and scalable. Dolby Atmos for the home allows for up to nine speakers at base level, up to four height channels and an LFE channel for a subwoofer.

Dolby Atmos is already available for home theater on selected titles on OTT streaming video platforms like Vudu and on a few dozen Blu-ray Discs. It’s not restricted to the new Ultra HD Blu-ray standard but works with the ‘old’ BD standard as well. In the UK, BT TV has already begun broadcasting football matches in UHD with Dolby Atmos sound, live.

Encoding

You may have read that with UHD, new compression standards have been introduced. Although UHD encoding is possible with MPEG-4 AVC (also known as H.264), and some of the early test channels were using this, a more efficient standard called HEVC or H.265 has been standardized. Decoder chips for TVs and set-top boxes, as well as encoder hardware and software for broadcasters, are now commonly available, so expect all future UHD transmissions (and also many HD ones) to use the new HEVC standard.

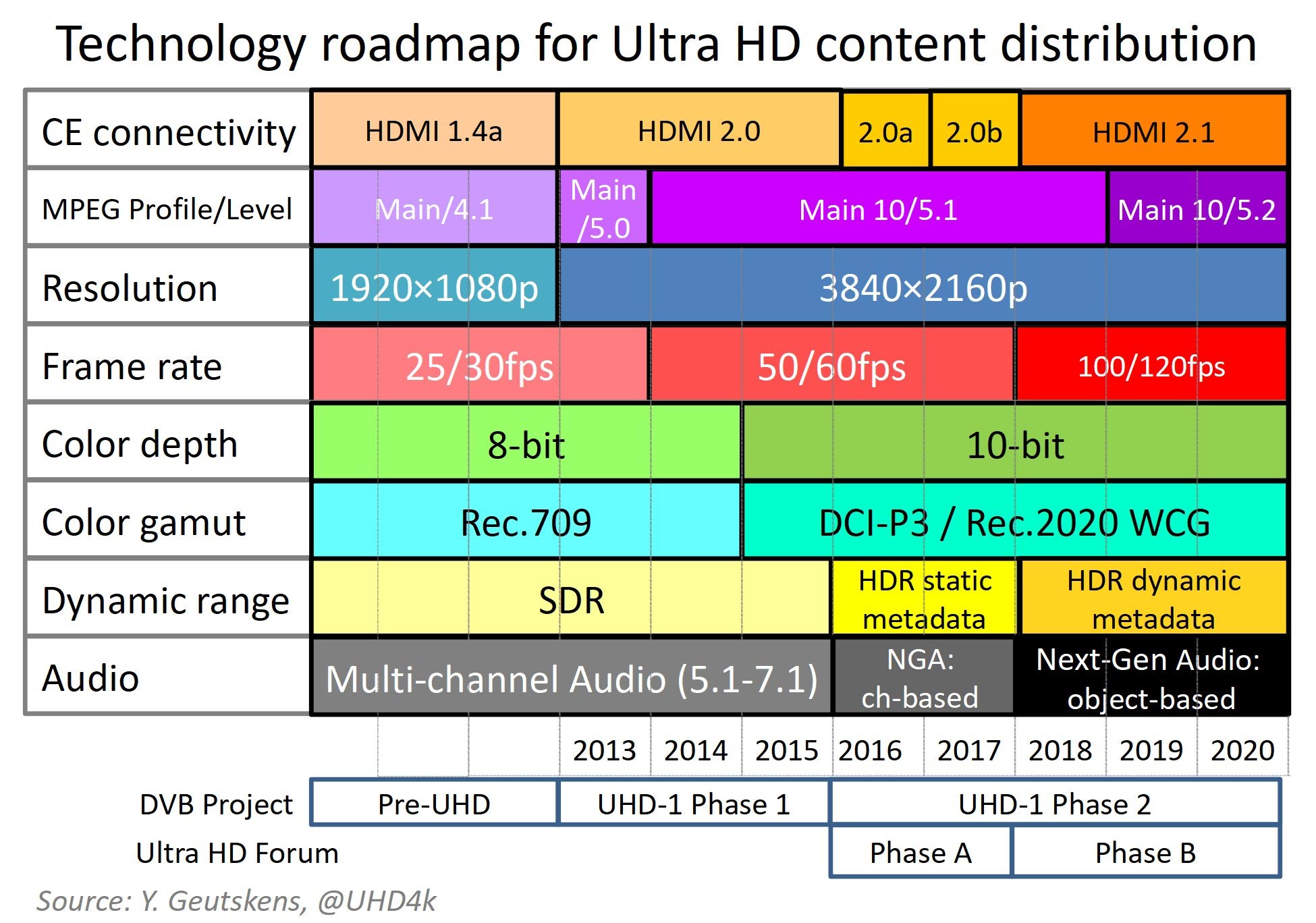

At a lower level, there are different flavors of MPEG and HEVC called profiles and levels. 1080p HD used Main profile, level 4.1. For UHD, we need Main profile, level 5.0. 2160p50 and 2160p60 meanwhile require ‘Main 10’ profile, level 5.1 while HFR demands Main 10 profile, level 5.2.
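One way to see where these level numbers come from: each HEVC level caps the picture size and the number of luma samples decoded per second. The sketch below picks the lowest level whose limits cover a given format. The limit values are quoted from memory of the level table in the H.265 specification (Annex A) and should be verified against it. The function ignores tiers, bitrate limits and other constraints, so treat it as an illustration rather than a conformance check.

```python
# (level, max luma picture size in samples, max luma sample rate in samples/s)
# Assumption: values as recalled from the H.265 level limits table; verify before use.
HEVC_LEVELS = [
    ("4.0", 2_228_224, 66_846_720),
    ("4.1", 2_228_224, 133_693_440),
    ("5.0", 8_912_896, 267_386_880),
    ("5.1", 8_912_896, 534_773_760),
    ("5.2", 8_912_896, 1_069_547_520),
]


def minimum_level(width, height, fps):
    """Return the lowest listed level that fits the format, or None if none does."""
    picture_size = width * height
    sample_rate = picture_size * fps
    for level, max_size, max_rate in HEVC_LEVELS:
        if picture_size <= max_size and sample_rate <= max_rate:
            return level
    return None


print(minimum_level(1920, 1080, 60))
print(minimum_level(3840, 2160, 30))
print(minimum_level(3840, 2160, 60))
print(minimum_level(3840, 2160, 120))
```

Under these assumed limits the results line up with the levels named in the text: 4.1 for 1080p, 5.0 for UHD at up to 30 fps, 5.1 for 2160p50/60 and 5.2 for HFR.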

Connectivity

Digital TV sets can get video signals from external devices such as set-top boxes and media players through HDMI. Version 1.4a was good enough for HD and even for UHD so long as the frame rate was no higher than 30fps. HDMI 2.0, first introduced in 2014, raises the capability to 60fps for UHD. It also enables a newer version of HDMI’s content protection technology, HDCP 2.2. Ultra HD Blu-ray players and BT’s Ultra HD STB require HDCP 2.2-compliant TV sets.

HDMI 2.0 does not support HDR but version 2.0a supports HDR10 and v2.0b supports HLG in addition. Fortunately, sets can be upgraded from 2.0 to 2.0a with a software update. Upgrading from v1.4a is not possible.
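The version differences described so far can be summarized as a small lookup table. This sketch encodes only what the text states (maximum UHD frame rate and HDR formats per HDMI version); the dictionary layout and the `can_carry` helper are illustrative and not part of any HDMI API.

```python
# Capabilities per HDMI version, as described in the text.
HDMI_CAPABILITIES = {
    "1.4a": {"max_uhd_fps": 30, "hdr_formats": set()},
    "2.0": {"max_uhd_fps": 60, "hdr_formats": set()},
    "2.0a": {"max_uhd_fps": 60, "hdr_formats": {"HDR10"}},
    "2.0b": {"max_uhd_fps": 60, "hdr_formats": {"HDR10", "HLG"}},
}


def can_carry(version, fps, hdr_format=None):
    """True if this HDMI version can carry a UHD signal at fps with the given HDR format."""
    caps = HDMI_CAPABILITIES[version]
    if fps > caps["max_uhd_fps"]:
        return False
    return hdr_format is None or hdr_format in caps["hdr_formats"]


print(can_carry("1.4a", 60))          # UHD at 60 fps over HDMI 1.4a
print(can_carry("2.0", 60, "HDR10"))  # HDR10 over plain HDMI 2.0
print(can_carry("2.0b", 60, "HLG"))   # HLG over HDMI 2.0b
```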

In principle HDMI 2.0 offers enough bandwidth for HFR video but not at the full 3840×2160 resolution of Ultra HD – only at an intermediate resolution of 2560×1440 (four times the pixel count of ‘720p’), common in PC/monitor environments. That’s one of the main reasons HDMI 2.1 was conceived. With its 48 Gbps bandwidth it can handle frame rates of 120fps at resolutions of 4K and well beyond. In environments other than TV, newer interfaces such as DisplayPort over USB-C or MHL may find their place.
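A rough back-of-the-envelope calculation shows why HFR fits at 2560×1440 but not at full UHD over HDMI 2.0. The sketch below counts only active pixels at 24 bits per pixel and ignores blanking intervals, so real requirements are somewhat higher. It assumes HDMI 2.0's 18 Gbps link rate with 8b/10b coding (about 14.4 Gbps of usable payload), a figure not given in the text above; for HDMI 2.1 it uses the 48 Gbps link rate quoted there without accounting for coding overhead.

```python
def active_video_gbps(width, height, fps, bits_per_pixel=24):
    """Uncompressed data rate for the active picture only, in Gbps (no blanking)."""
    return width * height * fps * bits_per_pixel / 1e9


# Assumption: 18 Gbps HDMI 2.0 link with 8b/10b coding leaves 8/10 for payload.
HDMI_2_0_PAYLOAD_GBPS = 18.0 * 8 / 10
# Link rate quoted in the text; coding overhead is not subtracted here.
HDMI_2_1_LINK_GBPS = 48.0

formats = {
    "2160p60": (3840, 2160, 60),
    "2160p120": (3840, 2160, 120),
    "1440p120": (2560, 1440, 120),
}

for name, (width, height, fps) in formats.items():
    rate = active_video_gbps(width, height, fps)
    print(name, round(rate, 2), rate <= HDMI_2_0_PAYLOAD_GBPS, rate <= HDMI_2_1_LINK_GBPS)
```

Even in this simplified form, 2160p120 at 8 bits per subpixel needs close to 24 Gbps, well beyond what HDMI 2.0 can carry, while 1440p120 stays under the limit.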

Timing

The above innovations are not arriving on the market with a single big bang. Instead, they’re trickling down gradually, bringing the possible quality level a little further each year, step by step. Standards-defining organizations (SDOs), notably the DVB Project and the Ultra HD Forum, are working to ensure these features are not only specified properly but also introduced in a coordinated way with a phased approach.

Beyond UHD-1

Even with UHD + HDR + NGA + HFR, progress doesn’t come to an end. Although some are debating the benefits of UHD for living room use, others argue this resolution is not even big enough. The Japanese industry in particular, with support from the Ministry of Internal Affairs and Communications, is actively pushing for what they call 8K – arguably a catchier name than UHD-2, even though the resolution for this will be 7680×4320p, not the cinematic 8192×4320p resolution.

The switch to UHD-2 has such repercussions throughout the entire capture, production and delivery chain that few outside of Japan are prepared to even talk about it. LG, Samsung, Sharp and several Chinese manufacturers have showcased several ‘8K’ displays at CES but apart from being technology statements, the only practical use for these products in the foreseeable future is likely going to be digital signage applications and monitors for graphic designers, professional photographers, etc. who generate their own content.

Some argue (reportedly, Sony officials have) that the only point of 8K is VR and the ability to do autostereoscopic i.e. glasses-free 3D with an effective resolution of 4K. They may have a point.

Now what?

It’s clear the technology for TV perfection is not in place yet. It’s not even crystal clear yet when each of the elements is going to be in place, or how all challenges will be solved. Should consumers wait until all this is clear? They’d better not, or they might have to wait for many years to come. Moreover, if consumers stopped buying TVs, there soon would be no TV makers left. There will always be something better on the horizon than what you can find on retail shelves. Especially when it comes to ‘8K’, the industry (in particular the CE industry) should be careful in managing expectations, or consumers may postpone their next purchase for too long.

We need to be prepared for an installed base with strongly varying capabilities. There will be SD, HD and UHD televisions. The UHD TV park will consist of legacy sets, sets with WCG (and SDR), and sets with HDR as well as WCG. This fragmentation will get worse when HFR arrives.

Ultra HD Blu-ray players and STBs for services like BT Sport Ultra HD require HDCP 2.2 to deliver UHD. Early adopters who bought their UHD TV in 2013 or even in 2014 can only get HD from these sources, which will be a disappointment. Depending on the implementation, HDR signals may or may not work on SDR TV sets, and if they do, they may work only on sets that employ WCG, although that’s the vast majority of the installed base of UHD TVs now.

Ultra HD Blu-ray players are flexible enough to adapt from HDR to SDR and from UHD to HD. For OTT streaming providers like Netflix and Amazon Prime Video it will be relatively easy to adapt to the diverse client base, although they will see the number of encoding profiles multiply. For broadcasters it’s hardly an option to cater to each segment of the user base with an individual signal tailored to each user’s hardware. They simply can’t afford the bandwidth – especially for terrestrial transmission, but also for satellite and cable distribution.
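How quickly encoding profiles multiply can be illustrated by enumerating feature combinations. The sketch below is a simplified model with hypothetical labels ("p60", "p120") and a hypothetical `best_variant` helper; real streaming ladders also vary bitrate, codec and audio format.

```python
from itertools import product


def stream_variants(resolutions, dynamic_ranges, frame_rates):
    """Every combination a provider would have to encode in this simplified model."""
    return list(product(resolutions, dynamic_ranges, frame_rates))


def best_variant(variants, device_caps):
    """Pick the last variant whose features the device supports, else None.

    Assumes the variants are listed roughly from least to most demanding.
    """
    supported = [v for v in variants if all(feature in device_caps for feature in v)]
    return supported[-1] if supported else None


today = stream_variants(["HD", "UHD"], ["SDR"], ["p60"])
future = stream_variants(["HD", "UHD"], ["SDR", "HDR"], ["p60", "p120"])
print(len(today), len(future))

# A hypothetical early UHD set: UHD panel, but no HDR and no HFR.
early_uhd_set = {"HD", "UHD", "SDR", "p60"}
print(best_variant(future, early_uhd_set))
```

An OTT service can select per device in this way; a broadcaster would have to transmit every variant simultaneously, which is where the bandwidth cost becomes prohibitive.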

Conclusion

What this means is that, with the introduction of each new feature, it’s essential to maintain compatibility with existing equipment as much as possible and to ensure graceful degradation where needed. The TV industry can afford neither to alienate the early adopters nor to cut ties with the mass market.

Footnotes

Yoeri Geutskens is a freelance media technology business consultant. He has worked in consumer electronics for over 15 years and writes about high-resolution audio & video. You can find his blog about Ultra HD and 4K at @UHD4k and @UltraHDBluray.